Introducing the new, improved RecordSearch Data Scraper!

It was way back in 2009 that I created my first scraper for getting machine-readable data out of the National Archives of Australia’s online database, RecordSearch. Since then I’ve used versions of this scraper in a number of different projects such as The Real Face of White Australia, Closed Access, and Redacted (including the recent update). The scraper is also embedded in many of the notebooks that I’ve created for the RecordSearch section of the GLAM Workbench.

However, the scraper was showing its age. The main problem was that one of its dependencies, Robobrowser, is no longer maintained. This made it difficult to update. I’d put off a major rewrite, thinking that RecordSearch itself might be getting a much-needed overhaul, but I could wait no longer. Introducing the brand new RecordSearch Data Scraper.

Just like the old version, the new scraper delivers machine-readable data relating to Items, Series and Agencies – from both individual records and search results. It also adds a little extra to the basic metadata. For example, if an Item is digitised, the data includes the number of pages in the file. Series records can include the number of digitised files, and the breakdown of files by access category.

The new scraper adds some additional search parameters for Series and Agencies. It also makes use of a simple caching system to improve speed and efficiency. RecordSearch relies on an odd assortment of sessions, redirects, and hidden forms, which make scraping a challenge. Hopefully I’ve nailed down the idiosyncrasies, but I expect to be catching bugs for a while.
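To give a rough idea of what a simple cache for scraped pages can look like, here's a minimal sketch using only the Python standard library. It is not the scraper's actual code: the `PageCache` class, the `fake_fetch` function, and the example URL are all made up for illustration, and I'm assuming a file-based cache keyed on the URL and query parameters with a one hour expiry.

```python
import hashlib
import json
import tempfile
import time
from pathlib import Path


class PageCache:
    """Hypothetical file-based cache keyed by URL plus query parameters."""

    def __init__(self, cache_dir, max_age_seconds=3600):
        self.cache_dir = Path(cache_dir)
        self.cache_dir.mkdir(parents=True, exist_ok=True)
        self.max_age_seconds = max_age_seconds

    def _path_for(self, url, params):
        # Sort the parameters so the same request always maps to the same file.
        key = json.dumps([url, sorted((params or {}).items())])
        digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
        return self.cache_dir / f"{digest}.html"

    def get(self, url, params, fetch):
        """Return a fresh cached page if there is one, otherwise call fetch()."""
        path = self._path_for(url, params)
        if path.exists():
            age = time.time() - path.stat().st_mtime
            if age < self.max_age_seconds:
                return path.read_text(encoding="utf-8")
        html = fetch(url, params)
        path.write_text(html, encoding="utf-8")
        return html


# Stand-in for a real HTTP request (an assumption for this demo only).
calls = []


def fake_fetch(url, params):
    calls.append((url, params))
    return f"<html>{params['id']}</html>"


cache = PageCache(tempfile.mkdtemp())
first = cache.get("https://example.org/item", {"id": "123"}, fake_fetch)
second = cache.get("https://example.org/item", {"id": "123"}, fake_fetch)
print(len(calls))  # the second request is answered from the cache
```

The point is just that repeated requests for the same record don't have to go back to the server each time, which makes things faster and is a bit kinder to the site being scraped.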

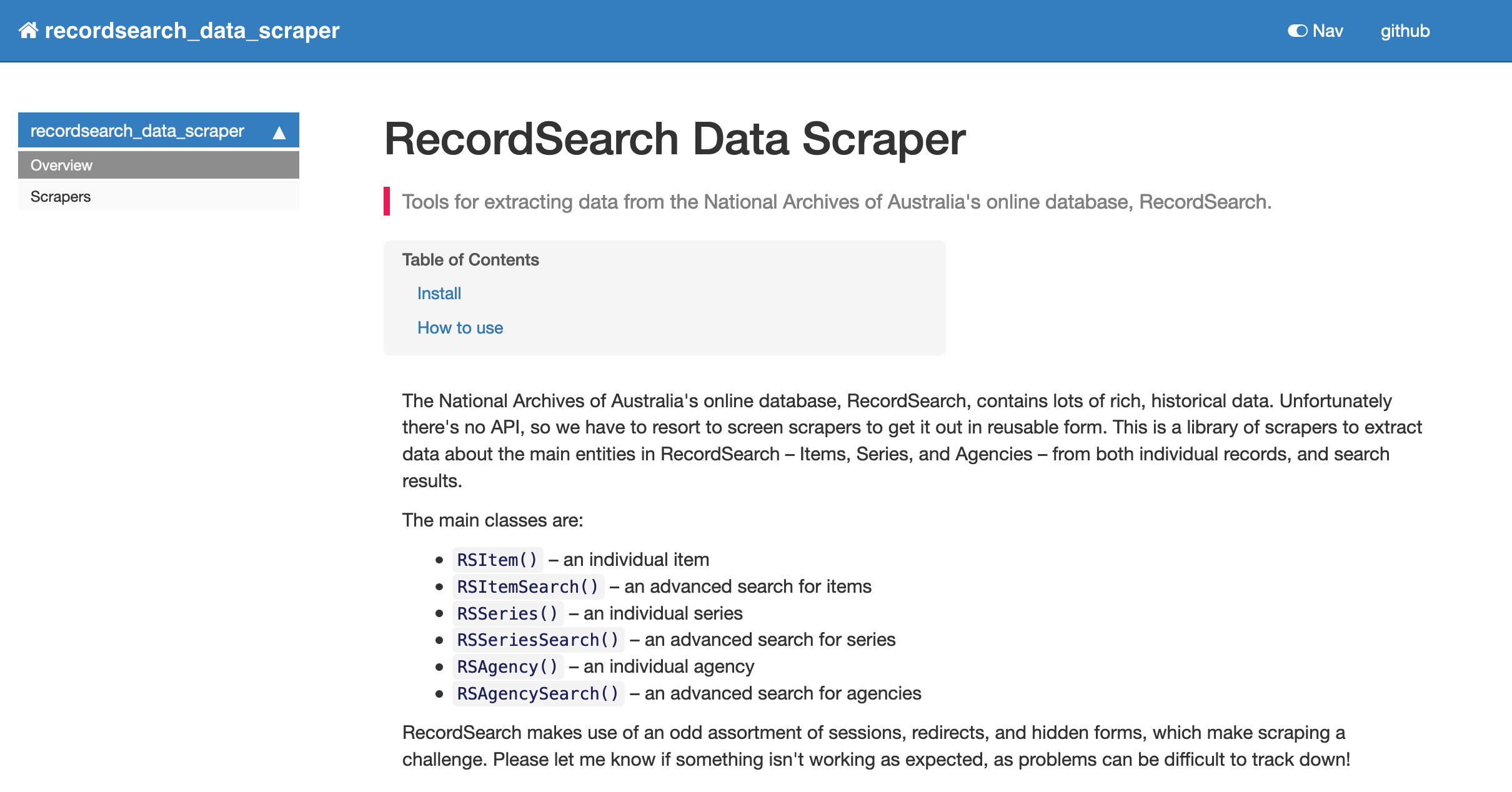

I created the new scraper in Jupyter using NBDev. NBDev helps you to keep your code, examples, tests, and documentation all together in Jupyter notebooks. When you’re ready, it converts the code from the notebooks into distributable Python libraries, runs all your tests, and builds a documentation site. It’s very cool.

Having updated the scraper, I now need to update the notebooks in the GLAM Workbench – more on that soon. The maintenance never ends! #dhhacks